Human-Centered AI

Snapdragon Partners incubates startups, builds breakthrough tools, and provides strategic consulting for organizations embracing human-centric AI and AI transformation.

What We Do

We help organizations harness AI in ways that amplify human capability — not replace it.

Startup Studio

We build AI-driven products internally, from concept to launch. Each venture is selected for its potential to put humans at the center of a meaningful AI-powered experience.

Maestro App Factory

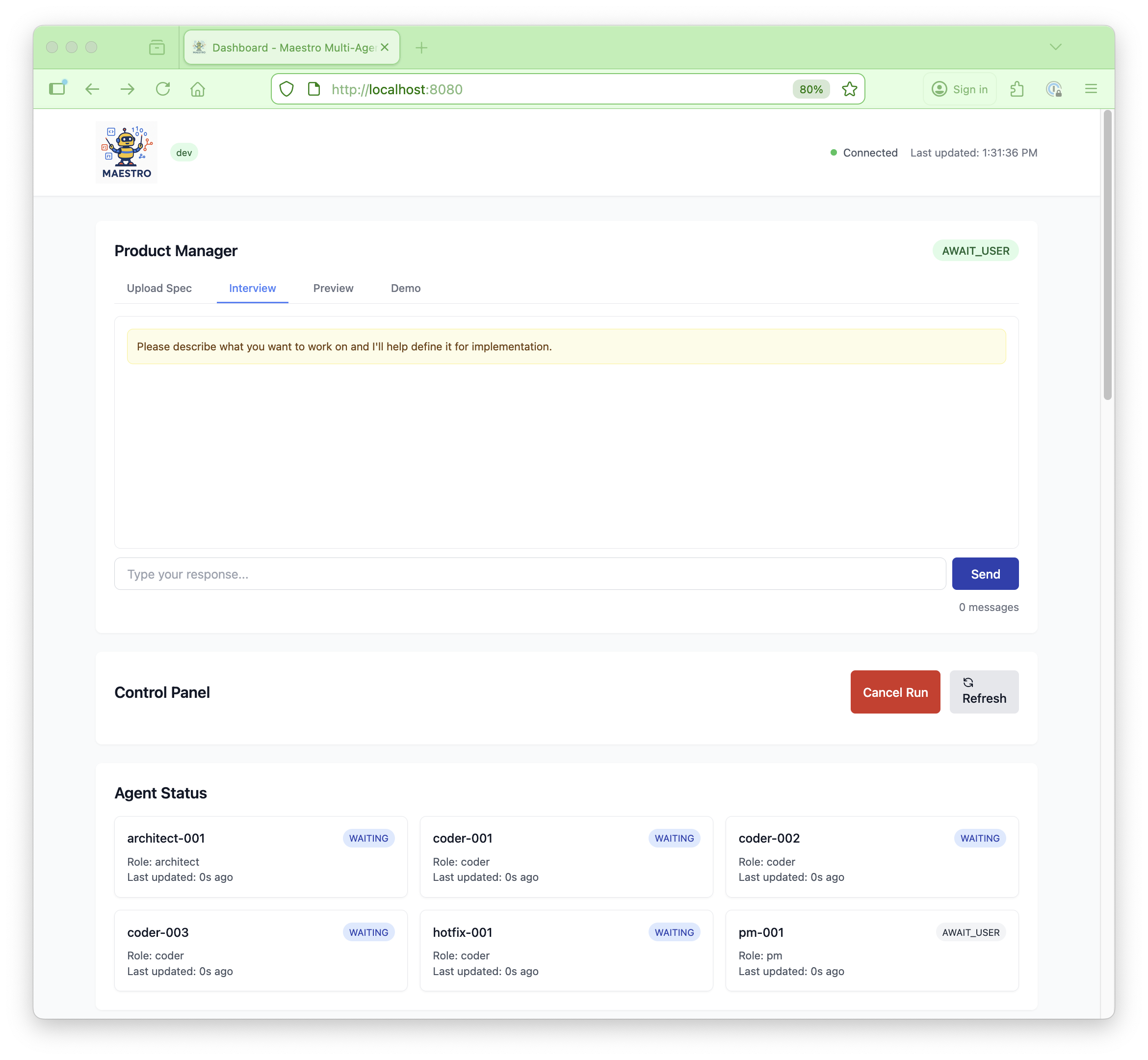

Our open-source agentic development tool organizes AI agents like high-performing human teams — with a Product Manager, Architect, and Coders — to build full applications using structured workflows and professional software engineering principles.

Snapdragon Partners offers premium support and integration packages for Maestro App Factory™. Contact us for details.

Available for Mac and Linux. Free and open source.

AI Transformation Consulting

We work with engineering leaders and product organizations to unlock the potential of AI-assisted development. Our engagements range from hands-on technical builds to organizational transformation.

Get in Touch

Whether you're exploring AI transformation or want to learn more about our work, we'd love to hear from you.

Other Ways to Reach Us

Prefer a different channel? Connect with us on LinkedIn or explore our open-source work on GitHub.

LinkedIn: Snapdragon Partners
GitHub: SnapdragonPartners